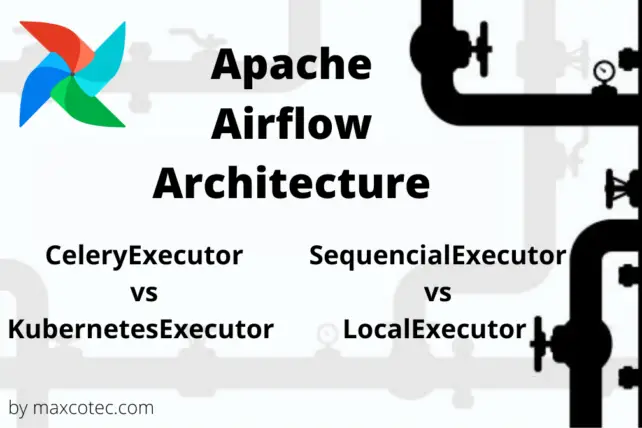

If you are reading this article, you are probably already familiar with what Apache Airflow is and why and when it’s needed. If not, I’d highly recommend reading the previous articles first: (1) Apache Airflow Introduction and (2) Apache Airflow Architecture.

In this article, we’ll demonstrate how you can spin up your own Apache Airflow instance on your local machine using Docker. This minimal setup uses Airflow’s simplest configuration: the sequential executor and a SQLite database. The aim of this tutorial is to help you get started with Airflow quickly so that you can play around with it, create your DAGs, experiment, and learn how Airflow works. So let’s get started.


Requirements

Docker

To keep the setup quick and easy, we will run and deploy Apache Airflow with Docker. Docker is a containerization platform that lets you create compact, isolated environments that are easy to spin up, cost-effective, and quick to deploy in any environment, so you don’t have to install all of the dependencies on your local machine.

First off, make sure you have Docker installed on your machine. Head over to the Docker getting started page and download the installer for your operating system. Once installed, open a terminal and run `docker version` to make sure it is installed properly. Mine is `20.9.7 f0df350`

Docker-Compose

Next up is `docker-compose`, an orchestration tool for Docker that runs and manages multiple containers. It makes it easy for containers to talk to each other by creating a dedicated network (more on this later). It usually ships with Docker, but if it doesn’t, install it from here. Validate the installation by running `docker-compose --version`. Mine is `1.29.2 build 5becea4c`

Python3

Make sure you have Python 3 installed. The exact version that we will be using is 3.8.

Apache Airflow Docker compose file

Download the docker-compose file from here

NOTE: This article focuses on the Airflow SequentialExecutor with a SQLite database. The code for the same setup using MySQL and the Airflow LocalExecutor is also available here.

We define the containers in this YAML file under the section named `services`. Each container runs one Airflow service, namely the scheduler, the webserver, and (when using the LocalExecutor variant) the MySQL database. Let me walk you through the core components of this YAML file and familiarize you with the syntax. First off, let’s look at a step that is used multiple times across the docker-compose file:

<<: *airflow-common

You will find this as the first step defined in every service. `<<` is the YAML merge key, and `*airflow-common` is an alias that refers back to a block of content declared elsewhere. We declare that block, `airflow-common`, at the top of the file by marking it with an anchor, which starts with the `&` symbol. This syntax reduces the amount of YAML by reusing content that is shared across multiple services. Under `airflow-common`, we define the configuration used by all of the services.

- image: Here we define container image `apache/airflow:2.1.1-python3.8`

- environment variables: here we can override the default Airflow configs (https://github.com/apache/airflow/blob/main/airflow/config_templates/default_airflow.cfg) using the syntax `AIRFLOW__<section_name>__<variable_name>`, where the section name is the section of the config file that the variable belongs to. All environment variables defined here are also placed under the YAML anchor airflow-common-env, which we will use in the rest of the container definitions.

- volumes: defines a persistence layer for the containers. In this case, we create 4 volumes: dags and plugins (from where Airflow picks up the DAG and plugin code), logs (where Airflow persists its logs), and db (where Airflow creates and stores the SQLite instance and metadata).

- user: the user inside the Docker containers needs to have the same permissions as the user on our local machine. This is only required if you are on Linux or Mac.

- depends_on: adds a dependency on another container. When using the LocalExecutor, where we spin up a dedicated container for the MySQL service, the rest of the containers can only start once the MySQL container is up.
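To make the merge behaviour and the environment variable naming concrete, here is a small Python sketch. It is an analogy, not how docker-compose is implemented: merging `airflow-common` into a service with `<<` behaves roughly like a shallow dictionary merge in which the service's own keys take precedence. The helper names and the sample values (other than the image tag) are assumptions made for illustration.

```python
def airflow_env_name(section: str, key: str) -> str:
    """Build the environment variable that overrides `key` in the given config section."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


# Stand-in for the block anchored as &airflow-common (illustrative values only).
airflow_common = {
    "image": "apache/airflow:2.1.1-python3.8",
    "environment": {
        airflow_env_name("core", "executor"): "SequentialExecutor",
        airflow_env_name("core", "load_examples"): "false",
    },
    # Hypothetical host:container paths for two of the four volumes.
    "volumes": ["./dags:/opt/airflow/dags", "./logs:/opt/airflow/logs"],
}


def merge_service(common: dict, service: dict) -> dict:
    """Mimic `<<: *airflow-common`: shared keys first, service keys override them."""
    return {**common, **service}


scheduler = merge_service(airflow_common, {"command": "scheduler", "restart": "always"})
print(scheduler["image"])
print(sorted(scheduler["environment"]))
```

Every service built this way shares the same image, environment, and volumes, and only adds what is specific to it, such as its command.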

Containers

airflow-init

This container first applies everything defined in airflow-common. The default entrypoint command of the Airflow image is `airflow`, which we override with a custom bash script. The first two commands, `airflow db init` and `airflow db upgrade`, initialize the Airflow database. Finally, we create an admin user with the `airflow users create` command. This is the best place to add other initialization commands, such as setting up custom connections, secrets, or new roles.
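The sequence of initialization commands can be sketched as a single chained shell command, built here in Python. This is only an illustration of the idea: the credentials are placeholders, the function name is hypothetical, and the exact script in the compose file may differ.

```python
import shlex

# Placeholder admin details for illustration only; use your own values.
admin_user = {
    "username": "admin",
    "password": "admin",
    "firstname": "Admin",
    "lastname": "User",
    "role": "Admin",
    "email": "admin@example.com",
}


def build_init_script(user: dict) -> str:
    """Chain the init commands with && so each runs only if the previous one succeeded."""
    commands = [
        ["airflow", "db", "init"],
        ["airflow", "db", "upgrade"],
        [
            "airflow", "users", "create",
            "--username", user["username"],
            "--password", user["password"],
            "--firstname", user["firstname"],
            "--lastname", user["lastname"],
            "--role", user["role"],
            "--email", user["email"],
        ],
    ]
    # shlex.join quotes each argument safely for the shell.
    return " && ".join(shlex.join(command) for command in commands)


print(build_init_script(admin_user))
```

Additional setup steps, such as creating connections, would be appended to the same list.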

airflow-scheduler:

This container starts the scheduler, the heart of Airflow. We simply run the command `scheduler`. The container restart policy is set to always, so the container is restarted automatically in the event of a failure.

airflow-webserver:

This container starts the Airflow webserver by running the command `webserver`. We also expose the internal container port 8080 to the host machine on port 8081. The healthcheck lets Docker determine the health of the container by running the command given in its `test` section.
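As an illustration of what such a health test can verify, the sketch below inspects the JSON that the Airflow 2.x webserver returns from its `/health` endpoint. The compose file's actual test command is not reproduced in this article, so treat this as an assumption about one reasonable check. The function names are hypothetical, and the default URL uses the host port 8081 described above.

```python
import json
import urllib.request


def is_healthy(payload: dict) -> bool:
    """Return True when both the metadata database and the scheduler report healthy."""
    components = ("metadatabase", "scheduler")
    return all(payload.get(name, {}).get("status") == "healthy" for name in components)


def fetch_health(url: str = "http://localhost:8081/health", timeout: float = 5.0) -> dict:
    """Fetch and decode the health payload; raises if the webserver is unreachable."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Sample payload in the shape Airflow 2.x reports (no network call is made here).
sample = {
    "metadatabase": {"status": "healthy"},
    "scheduler": {"status": "healthy", "latest_scheduler_heartbeat": None},
}
print(is_healthy(sample))
```

Once the containers are running, `is_healthy(fetch_health())` performs the same check against the live webserver from the host machine.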

Set User permissions (Mac and Linux users only)

Mac and Linux users need to export some environment variables. Run the following command in a terminal:

echo -e "AIRFLOW_UID=$(id -u)\nAIRFLOW_GID=0" > .env

This creates a .env file that will be loaded into all containers. It makes sure that the user permissions are exactly the same between the host directory volumes and the container directories.
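For readers who want to see exactly what ends up in the file, here is a rough Python equivalent of the command above. The function names are hypothetical, and `os.getuid()` is only available on Linux and macOS, which matches the scope of this step.

```python
import os
from pathlib import Path


def build_env_file(uid: int, gid: int = 0) -> str:
    """Return the text that the echo command writes to .env."""
    return f"AIRFLOW_UID={uid}\nAIRFLOW_GID={gid}\n"


def write_env_file(directory: str = ".") -> Path:
    """Write .env into the given directory using the current user's UID."""
    path = Path(directory) / ".env"
    path.write_text(build_env_file(os.getuid()))
    return path


# Show the file content for an example UID without touching the disk.
print(build_env_file(1000), end="")
```

Calling `write_env_file()` from the directory that holds the docker-compose file produces the same result as the shell command.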

Spin-up Apache Airflow Docker containers

NOTE: Before running docker-compose, make sure you have enough memory allocated to the Docker host machine. Otherwise, the Airflow webserver might restart continuously and the scheduler might slow down. On Mac, you should allocate at least 4GB of memory to the Docker Engine (ideally 8GB). You can check and change the amount of memory here: https://docs.docker.com/desktop/mac/

The first container that needs to run is airflow-init, which sets up the database and creates the admin user. Run the following command:

docker-compose up airflow-init

This command will take a few minutes to finish. Once it has completed, it’s time to run all the containers. Run the following command:

docker-compose up

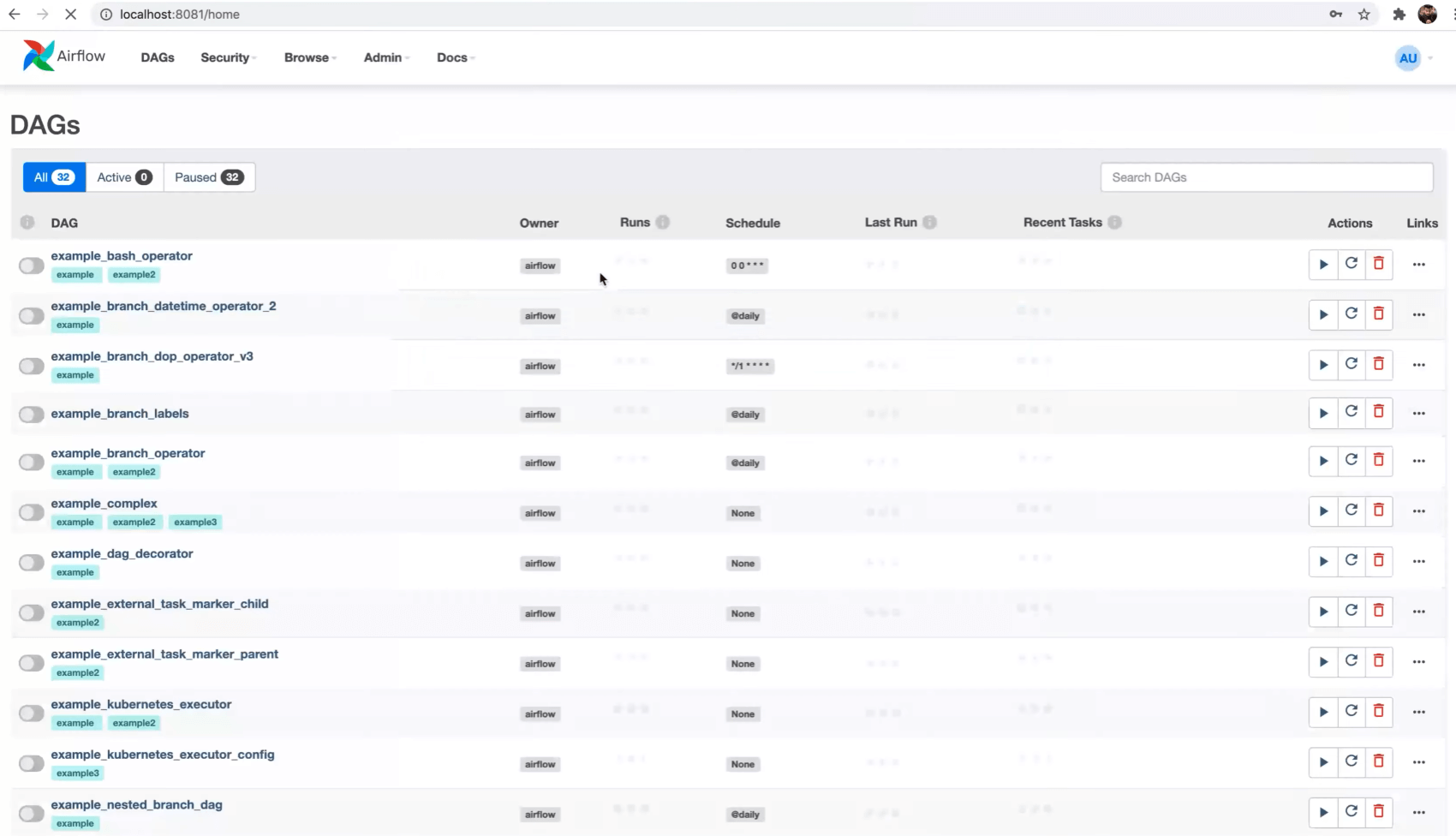

If everything runs successfully, you should now be able to access the Airflow webserver on localhost:8081. Use the admin user credentials (created in the airflow-init step) to log in to the webserver. After a successful login, you will be redirected to the webserver UI landing page.

Conclusion:

You now have a full-fledged Apache Airflow Docker instance running on your local machine. You can add your own DAG to this Airflow instance by simply placing the DAG code under the dags/ directory. Until our next section, where we will build our very first DAG based on a real-world example, learn and explore each and every feature yourself by creating and breaking things: “Fake it till you make it”.

This article is also available as a YouTube video, so if you’ve missed any nitty-gritty detail here, it will be covered in the video.